3. What is MCTS?: Monte-Carlo Tree Search:

The MCTS is a technique that combines random exploration and search tree to make smart decisions. Unlike Minimax or Alpha-Beta, it does not require an explicit evaluation function and relies on random simulations ("dummy parts") to evaluate the options.

3.1 Key MCTS Steps:

1. Selection: From the root, the algorithm descends into the tree by selecting nodes according to a balance between:

2. Exploitation: Choose the most promising move based on previous simulations.

3. Exploration: Test less explored moves to avoid missing out on an optimal solution.

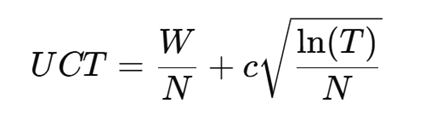

This step often uses a strategy such as UCT (Upper Confidence Bound for Trees), defined by:

Key MCTS Steps:

W: Number of wins from this node.

N: Total number of simulations passed by this node.

T: Total number of simulations in the tree.

C: Factor to adjust the balance between exploration and exploitation.

Expansion: If a selected node has available actions that have not yet been explored, a new node is created to represent one of those actions.

3.2 Simulation:

• From the newly added node, a game is simulated to a terminal state by playing randomly selected moves or via simplified heuristics.

• The objective is to evaluate the quality of this final state (win, lose or draw).

3.1 Backpropagation:

- The results of the simulation are traced through the tree to the root.

- Each node updates its statistics (wins, simulations) based on the result of the simulation.

4. Benefits:

Adaptability: Works in huge state spaces, like the Go (where Minimax is impractical).

Flexibility: Can be applied to problems where rules or assessments are difficult to formalize.

Dynamic increment: The calculation can be interrupted at any time, and the best current move can be played.

Resource efficiency: Focuses simulations on promising branches.